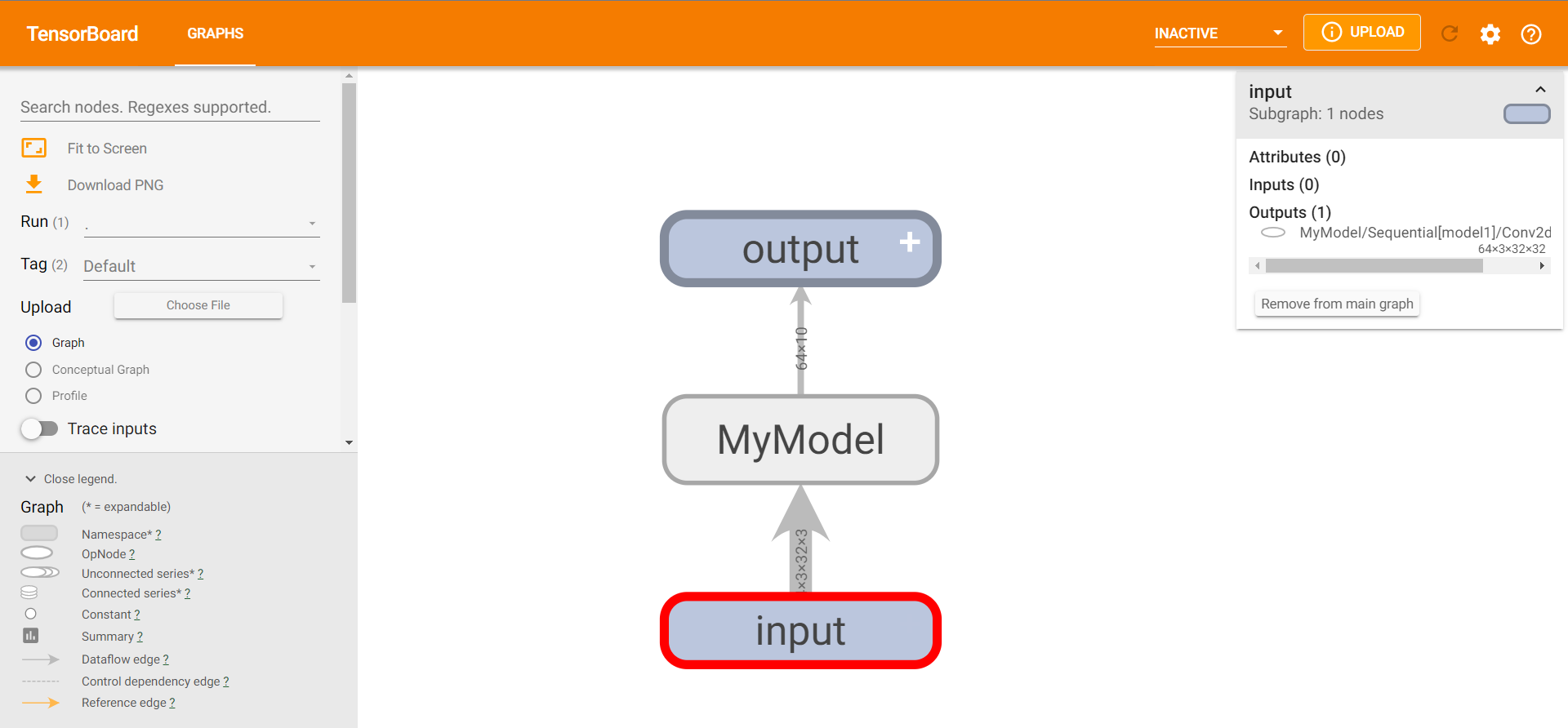

Loading... # pytorch学习4 ## 神经网络 ### nn.Sequential搭建实战 模块将按照它们在构造函数中传递的顺序添加到其中  **未使用Sequential** ~~~python from torch import nn from torch.nn import Conv2d, MaxPool2d, Flatten, Linear class MyModel(nn.Module): def __init__(self): super(MyModel, self).__init__() self.conv1 = Conv2d(3, 32, 5, padding=2) self.maxpool1 = MaxPool2d(2) self.conv2 = Conv2d(32, 32, 5, padding=2) self.maxpool2 = MaxPool2d(2) self.conv3 = Conv2d(32, 64, 5, padding=2) self.maxpool3 = MaxPool2d(2) self.flatten = Flatten() self.linear1 = Linear(1024, 64) self.linear2 = Linear(64, 10) def forward(self, x): x = self.conv1(x) x = self.maxpool1(x) x = self.conv2(x) x = self.maxpool2(x) x = self.conv3(x) x = self.maxpool3(x) x = self.flatten(x) x = self.linear1(x) x = self.linear2(x) test = MyModel() print(test) ~~~ 输出 ~~~ MyModel( (conv1): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (maxpool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (conv2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (maxpool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (conv3): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (maxpool3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (flatten): Flatten(start_dim=1, end_dim=-1) (linear1): Linear(in_features=1024, out_features=64, bias=True) (linear2): Linear(in_features=64, out_features=10, bias=True) ) ~~~ 测试: ~~~python input = torch.ones((64, 3, 32, 32)) output = test(input) print(output.shape) ~~~ 输出 ~~~ torch.Size([64, 10]) ~~~ **使用Sequential** 修改自定义MyModel类 ~~~python class MyModel(nn.Module): def __init__(self): super(MyModel, self).__init__() self.model1 = Sequential( Conv2d(3, 32, 5, padding=2), MaxPool2d(2), Conv2d(32, 32, 5, padding=2), MaxPool2d(2), Conv2d(32, 64, 5, padding=2), MaxPool2d(2), Flatten(), Linear(1024, 64), Linear(64, 10) ) def forward(self, x): x = self.model1(x) return x ~~~ 输出(和之前一样,代码更简洁) ~~~ MyModel( (model1): Sequential( (0): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (4): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2)) (5): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False) (6): Flatten(start_dim=1, end_dim=-1) (7): Linear(in_features=1024, out_features=64, bias=True) (8): Linear(in_features=64, out_features=10, bias=True) ) ) torch.Size([64, 10]) ~~~ 查看模型示意图(双击可以查看具体模型处理步骤) ~~~python writer = SummaryWriter("logs_seq") writer.add_graph(test, input) writer.close() ~~~  ### 损失函数和反向传播 **L1Loss**():计算输入和目标之间的绝对误差,参数reduction有none、mean、sum ~~~python import torch from torch.nn import L1Loss inputs = torch.tensor([1, 1, 3], dtype=torch.float32) targets = torch.tensor([1, 2, 5], dtype=torch.float32) inputs = torch.reshape(inputs, (1, 1, 1, 3)) targets = torch.reshape(targets, (1, 1, 1, 3)) loss = L1Loss(reduction='none') result = loss(inputs, targets) print(result) ~~~ 输出(none时相当于每个求差值并显示) ~~~ tensor([[[[0., 1., 2.]]]]) ~~~ reduction='**mean**':tensor(0.6667)(相当于计算差值和并求平均) reduction='**sum**':tensor(2.)(相当于计算差值和) **MSELoss**():计算均方误差,参数reduction通L1Loss 案例同上 ~~~python loss = MSELoss(reduction='sum') result = loss(inputs, targets) print(result) ~~~ 输出:tensor(5.) ~~~python x = torch.tensor([0.1, 0.2, 0.3]) y = torch.tensor([1]) x = torch.reshape(x, (1, 3)) loss_cross = CrossEntropyLoss() result_loss = loss_cross(x, y) print(result_loss) ~~~ 输出: ~~~ tensor(1.1019) ~~~ 最后修改:2022 年 08 月 19 日 © 允许规范转载 打赏 赞赏作者 支付宝微信 赞 如果觉得我的文章对你有用,请随意赞赏